Hi there 👋 Have you ever found yourself in a situation where you started to discuss how to evaluate Universal Design and ended up discussing ramps and other accessibility features? I have. Many times.

An example: I have been part of several research activities where we went out to explore norms and participation in the city, and ended up talking about the cracks between cobblestones or the steps to get into that inaccessible shop. While this certainly is important, we already know a lot about these things and how to solve them. What we still haven’t found are the keys to, for instance, moving beyond “us” and “them” in the city.

This leads me to a question I’ve been struggling with for years: Can or should Universal Design actually be evaluated?

The short answer is that it is contested. A growing body of scholarship argues that reducing UD to metrics fundamentally betrays its original mission.

Meanwhile, others have developed certification systems and standards, treating UD more like a compliance framework.

But there may be a third path: not rejecting benchmarking, but relocating it. What if we've been trying to evaluate the wrong things? Not because evaluation is impossible, but because we've been looking in the wrong place?

What Currently Gets Evaluated

When the Center for Universal Design at NC State released the 7 Principles of Universal Design in 1997, the framework was intended to "evaluate existing designs, guide the design process, and educate both designers and consumers." [1] What it explicitly did not include were metrics, standards, or benchmarks.

Edward Steinfeld and Jordana Maisel, recognising these (and other) limitations, developed The 8 Goals of Universal Design in their 2012 book Universal Design: Creating Inclusive Environments. Their explicit aim was to "define the outcomes of UD practice in ways that can be measured and applied to all design domains." [2]

A landscape of assessment instruments and standards now exists, though each operationalises UD differently. What these evaluate varies a lot, from contrast levels and physical dimensions to user satisfaction scores and organisational process compliance. Few systematically assess social integration, dignity, or equity.

The most sustained critique comes from scholars who argue that UD's original purpose was about societal transformation, not technical specification, and that metrics reduce a social justice movement to a compliance exercise [3, 4].

But what if the problem isn't evaluation itself, but what we've chosen to evaluate?

Unburdening Universal Design From Compliance

In a recent paper that I wrote together with Agneta Ståhl and Susanne Iwarsson, we argue that:

UD was originally conceptualised as being about societal attitudes, democracy, citizenship, equity, flexibility and social inclusion. Consequently, if Universal Design at all should be evaluated, it ought to be done on the premises of how such factors are assessed and not based on operationalisations of accessibility and usability. [5]

UD operates at a different level. The question isn't "how well does this environment meet accessibility standards?" or "can people use it effectively?" The question is "what kind of society are we building?" (See Nonclusive by Design #8 for more on this conceptualisation.)

This framing opens a door: if UD is about societal impact, perhaps that's what we should be evaluating?

The practical challenge then becomes: How do you evaluate attitude change, democratic participation, and equity? That's genuinely difficult, but it's the right difficult question. Measuring ramp gradients is the wrong easy question.

We already evaluate societal values, such as peace, sustainability, and national "goodness", through thoughtful proxy indicators.

The Positive Peace Index [6] is particularly apt. Peace may sound impossible to evaluate, yet it has been operationalised through eight interconnected pillars: Well-Functioning Government, Sound Business Environment, Equitable Distribution of Resources, Acceptance of the Rights of Others, Good Relations with Neighbours, Free Flow of Information, High Levels of Human Capital, and Low Levels of Corruption.

Importantly, none of those pillars is peace. They're conditions that make peace possible and indicators that peace exists. The same logic could apply to UD: what conditions make Universal Design possible, and what indicators suggest it exists?

The Universal Design Benchmark Matrix

Rather than abandoning benchmarking or accepting its current limitations, our recent work suggests a third path: relocating the benchmarking debate and what we evaluate.

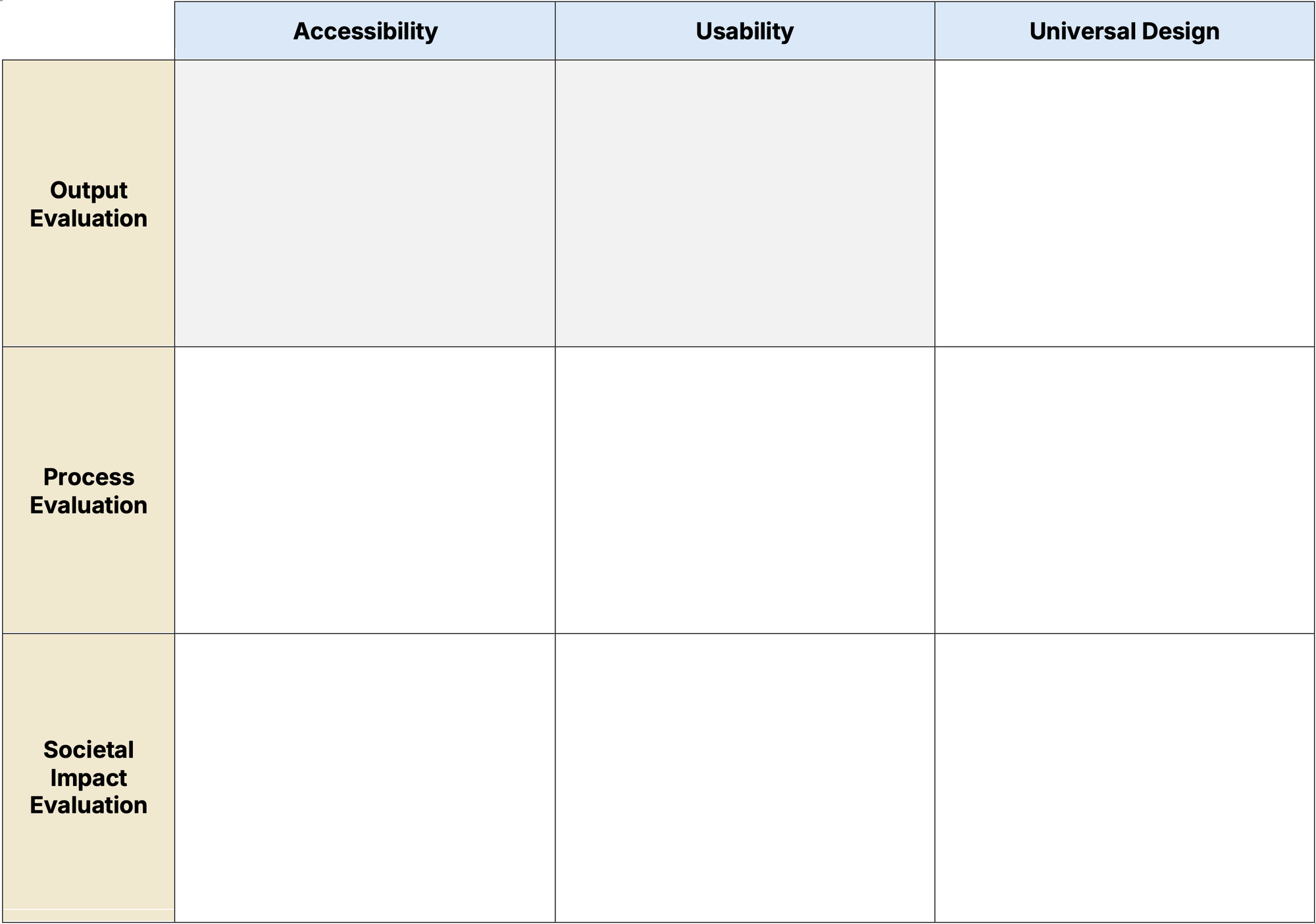

Over the past year, we’ve started developing and testing a matrix (Figure 1) based on the main argument from our 2025 article: that Accessibility, Usability, and Universal Design are complementary but distinct concepts. All three concepts are needed, since they capture different things [5]. The matrix doesn't reject evaluation. It asks us to evaluate what UD was actually about: societal attitudes, democracy, citizenship, and equity.

The matrix is a thinking tool, not a compliance checklist. The 3×3 matrix distinguishes between:

- What we're evaluating (columns): Accessibility, Usability, Universal Design.

- How we're evaluating it (rows): Output Evaluation, Process Evaluation, Societal Impact Evaluation.

The conceptual move is significant. Current "UD evaluation" typically happens in the output evaluation row, assessing whether environments meet standards or whether products are usable. But that's mainly an accessibility and usability evaluation.

There is also a long-term dimension to this. Progress is being made, but without benchmarking at the right level it remains difficult to demonstrate and even harder to build on. Benchmarking doesn't just mean evaluating at a single point in time. It means tracking change over time. Whatever you choose to benchmark, you commit to building a longitudinal dataset around it. If you benchmark ramp gradients, you will accumulate years of data about ramp gradients. Relocate the benchmarking to the societal impact level, and you start accumulating data about citizenship and equity instead.

To me, the lower right corner, where “Universal Design” meets “Societal Impact Evaluation”, is perhaps the most interesting one, since this is where Universal Design evaluation actually belongs. This is also where a lot of what I write about in these newsletters, such as nonclusion and belonging, lives. (What would you put here?)

We did some early testing of the matrix in the Syntax of Equality project. These are some examples of what the participants put in the lower right corner: trust in other people, democratic participation, the experience of the city, social sustainability accounting, and “for more people, not more [stuff]”.
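For those who think better in code than in tables, here is a minimal sketch of the matrix as a data structure. It is only an illustration, not part of our method: the names `CONCEPTS`, `LEVELS`, `matrix`, and `empty_cells` are made up for this example, and the only entries filled in are the participant suggestions listed above.

```python
from itertools import product

# Column and row labels as named in the text.
CONCEPTS = ("Accessibility", "Usability", "Universal Design")
LEVELS = ("Output Evaluation", "Process Evaluation", "Societal Impact Evaluation")

# One empty list of candidate indicators per cell, keyed by (level, concept).
# This layout is an assumption made for illustration only.
matrix = {(level, concept): [] for level, concept in product(LEVELS, CONCEPTS)}

# Participant suggestions for the lower right corner, as reported above.
matrix[("Societal Impact Evaluation", "Universal Design")] += [
    "trust in other people",
    "democratic participation",
    "the experience of the city",
    "social sustainability accounting",
    "for more people, not more [stuff]",
]


def empty_cells(m):
    """Return the (level, concept) cells that have no candidate indicators yet."""
    return [cell for cell, items in m.items() if not items]


if __name__ == "__main__":
    for level, concept in empty_cells(matrix):
        print(f"Still open: {concept} / {level}")
```

Running it lists the cells that are still open, which is more or less the question I am asking you further down.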

The matrix seems to open up productive thinking. What you see here is an early version, a thinking tool we're still developing, which is precisely why I now turn to you for your help.

Your Turn

I am curious about what you think about the matrix. Does it make sense to you? What would you evaluate?

I'd love to hear your thoughts, in a comment here, a PM, or via the online form or Word version linked below. You are exactly the ones who know what belongs in each cell.

I hope that this will lead to exciting material to return to in one of the coming newsletter editions, and perhaps even a paper for the UD2026 conference.

Please feel free to comment in Swedish or English.

Online Matrix form: https://form.jotform.com/260354338309053

Word version (downloadable):

Let's keep this discussion going 🌸

Notes and References

This piece builds on findings from The Syntax of Equality project, where we investigate situation-based categorisation and nonclusive design through citizen science and field observations.

Do you want to use the photos or illustrations in a publication, presentation, or video? Go ahead, and please tell me how you use them and what you learn 👍

References:

- [1] Connell, B. R., Jones, M., Mace, R., Mueller, J., Mullick, A., Ostroff, E., Sanford, J., Steinfeld, E., Story, M., & Vanderheiden, G. (1997). The Principles of Universal Design. https://design.ncsu.edu/research/center-for-universal-design/

- [2] Steinfeld, E., & Maisel, J. L. (2012). Universal design: Creating inclusive environments. John Wiley & Sons Inc.

- [3] Imrie, R. (2012). Universalism, universal design and equitable access to the built environment. Disability and Rehabilitation. https://doi.org/10.3109/09638288.2011.624250

- [4] Hamraie, A. (2017). Building access: Universal design and the politics of disability. University of Minnesota Press.

- [5] Hedvall, P.-O., Ståhl, A., & Iwarsson, S. (2025). Accessibility, usability and universal design – still confusing? Harmonisation of key concepts describing person-environment interaction to create conditions for participation. Disability and Rehabilitation, 1–10. https://doi.org/10.1080/09638288.2025.2491831

- [6] Positive Peace Index, by Institute for Economics & Peace, https://www.visionofhumanity.org/